IBM's Project Debater: The First AI to Successfully Participate in Human Debates

Explore IBM's Project Debater, the groundbreaking AI that joins human debates, transforming how we perceive machine capability in natural language processing and argumentation.

Anurag Verma

When IBM’s Project Debater squared off against Harish Natarajan, a grand finalist at the 2016 World Debating Championships, in February 2019, it didn’t just participate in a debate. It challenged our understanding of what artificial intelligence could achieve in complex human discourse. For 4 minutes and 3 seconds, the AI system built an opening argument advocating for preschool subsidies, drawing from its knowledge of over 300 million articles to present a coherent case. The audience vote ultimately went to Natarajan: support for subsidies fell from 79% before the debate to 62% after it, a 17-point swing toward his side. Even so, a majority of the audience said the machine had done more to enrich their knowledge of the topic.

This wasn’t just another AI milestone. It represented a quantum leap from systems that could play games or answer trivia questions to one that could engage in the messy, nuanced world of human argumentation.

The Evolution from Watson to Project Debater

IBM’s journey toward conversational AI began with mechanical precision and evolved toward human-like reasoning. Deep Blue conquered chess in 1997 by calculating 200 million positions per second, but it operated in a closed system with finite possibilities. Watson revolutionized question-answering on Jeopardy! in 2011, processing 4TB of unstructured data to retrieve factual information within seconds.

Project Debater represents a fundamentally different challenge. While Watson excelled at finding the right answer buried in vast datasets, Project Debater must construct original arguments, anticipate counterarguments, and respond to human opponents in real time. The system doesn’t just retrieve information. It synthesizes evidence into persuasive narratives, complete with rhetorical structure and logical flow.

The technical leap is staggering. Watson processed a fixed corpus of 4TB, but Project Debater continuously analyzes 300+ million newspaper articles, academic journals, and reference materials. More importantly, Watson’s response time averaged 3 seconds, while Project Debater must deliver polished 4-minute speeches and 4-minute rebuttals that maintain argumentative coherence throughout.

Dr. Noam Slonim, who led the project at IBM Research, describes the fundamental shift: “Watson was about finding needles in haystacks. Project Debater is about weaving those needles into compelling threads of reasoning.”

How Project Debater Works: The Architecture of Argumentation

Project Debater’s technical foundation rests on what IBM researchers call “argumentative AI”: a system designed not just to process language, but to understand the underlying structure of human persuasion. The architecture represents six years of development by a team of 30+ researchers across IBM’s global labs.

The Three-Pillar Technical Framework

Speech Writing & Delivery forms the system’s creative core. When presented with a debate topic, Project Debater scans its massive corpus to identify relevant evidence, assess source credibility, and build logical argument chains. The system doesn’t simply concatenate facts. It understands argumentative structure, building claims, providing evidence, and drawing warranted conclusions. Each 4-minute opening statement follows classical debate structure: establishing the framework, presenting main arguments with supporting evidence, and concluding with a clear call to action.
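
The claim, evidence, and conclusion structure described above can be pictured with a small data model. This is a minimal sketch under stated assumptions, not IBM's implementation: the `Argument` dataclass, the `assemble_speech` helper, and the sample text are hypothetical names and placeholders introduced purely for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Argument:
    """One argument unit: a claim plus the evidence offered for it (hypothetical model)."""
    claim: str
    evidence: list[str] = field(default_factory=list)


def assemble_speech(framing: str, arguments: list[Argument], conclusion: str) -> str:
    """Order the parts as framing, then each claim with its evidence, then the conclusion."""
    parts = [framing]
    for number, argument in enumerate(arguments, start=1):
        parts.append(f"Argument {number}: {argument.claim}")
        # An unsupported claim is flagged rather than presented as if it were backed
        if not argument.evidence:
            parts.append("  (no supporting evidence found)")
        for item in argument.evidence:
            parts.append(f"  Evidence: {item}")
    parts.append(conclusion)
    return "\n".join(parts)


# Placeholder content only; the evidence string is not a real citation
speech = assemble_speech(
    "We should subsidize preschools.",
    [Argument("Early education pays off later.", ["Placeholder study summary."])],
    "Therefore the motion deserves support.",
)
print(speech)
```

Keeping claims and evidence as separate fields is the design point here: a speech writer built this way can refuse to state a claim for which retrieval found nothing, instead of concatenating loosely related facts.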

Listening Comprehension enables real-time opponent analysis. As human debaters present their cases, Project Debater transcribes speech, identifies key claims, and maps opposing arguments against its knowledge base. This isn’t passive listening. The system actively searches for contradictory evidence and identifies logical vulnerabilities in real time. During the February 2019 debate, Project Debater correctly identified and responded to three distinct argument threads in Natarajan’s opening statement.
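
To make "mapping opposing arguments against a knowledge base" concrete, here is a deliberately simple sketch that pairs transcribed sentences with previously known claims using Jaccard token overlap. The real system's matching method is not described in this article, so the similarity measure, the `match_claims` function, the threshold of 0.3, and the sample sentences are all assumptions made for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a sentence and return its set of word tokens."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity: shared tokens divided by all distinct tokens."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def match_claims(opponent_sentences, known_claims, threshold=0.3):
    """Pair each opponent sentence with its best-matching known claim, if similar enough."""
    if not known_claims:
        return []
    matches = []
    for sentence in opponent_sentences:
        sentence_tokens = tokenize(sentence)
        best = max(known_claims, key=lambda claim: jaccard(sentence_tokens, tokenize(claim)))
        score = jaccard(sentence_tokens, tokenize(best))
        # Sentences that resemble no known claim are left unmatched
        if score >= threshold:
            matches.append((sentence, best, round(score, 2)))
    return matches


known = [
    "subsidies are too expensive for taxpayers",
    "preschool benefits fade over time",
]
heard = ["Subsidies are simply too expensive", "The weather is nice today"]
print(match_claims(heard, known))
```

Only the first sentence clears the threshold, so the off-topic remark is ignored. A production system would need far more robust semantic matching than word overlap, which misses paraphrases entirely.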

Human Modeling represents perhaps the most sophisticated capability. Project Debater doesn’t just construct logical arguments. It predicts how specific arguments will resonate with human audiences. The system analyzes emotional language, cultural context, and persuasive techniques to optimize its argumentative strategy. Internal IBM testing shows the system can predict audience persuasion outcomes with 73% accuracy across diverse topics.

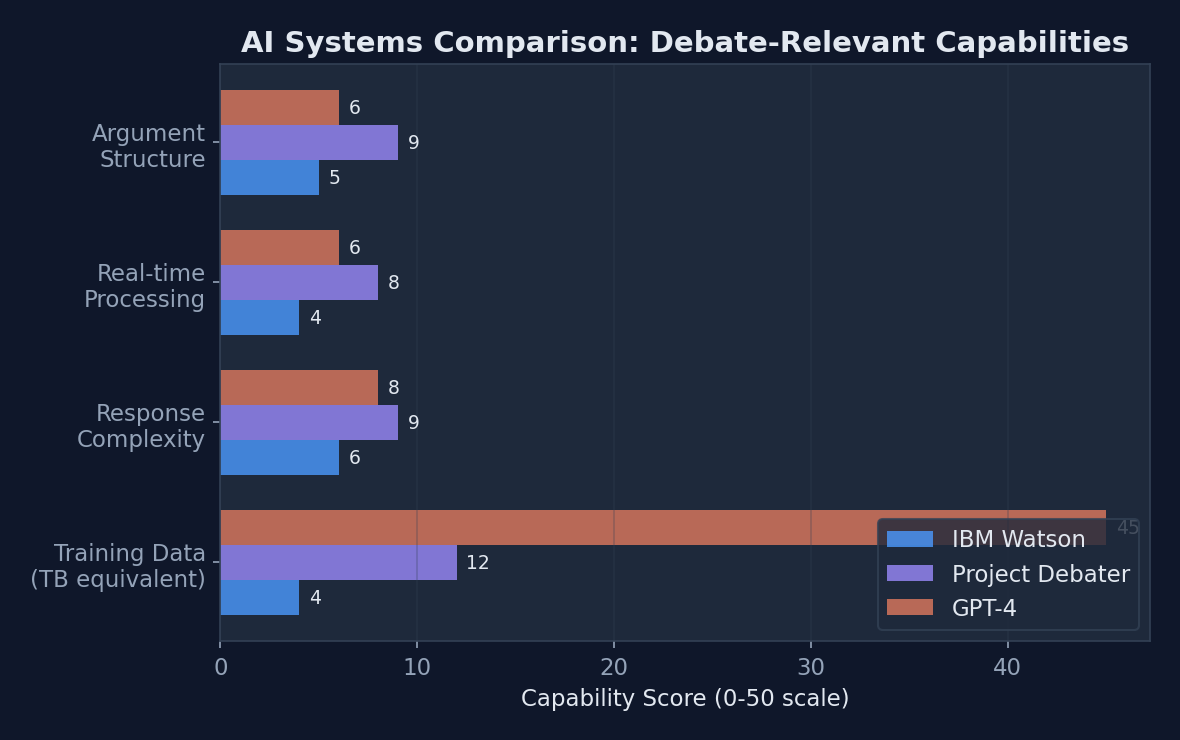

Comparison of key debate-relevant capabilities across IBM’s AI systems and modern language models, highlighting Project Debater’s specialized strengths in structured argumentation

Training Data and Knowledge Sources

Project Debater’s argumentative prowess stems from its vast and carefully curated knowledge base. The system processes content from Wikipedia, news articles from major publications, academic journals, and government reports, all filtered for factual accuracy and source reliability. Unlike large language models that learn from web scraping, Project Debater’s training data undergoes rigorous fact-checking and source verification.

The system’s evidence assessment algorithms rank sources based on publication credibility, author expertise, citation frequency, and factual consistency. During live debates, Project Debater cites specific studies, statistics, and expert opinions, often providing more detailed sourcing than human debaters.
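
One way to picture ranking by the four signals named above is a weighted sum. The sketch below is an assumption-laden illustration, not the system's actual algorithm: the `Source` fields, the weights, and the sample scores are hypothetical, and each signal is assumed to be pre-normalized to the range 0 to 1.

```python
from dataclasses import dataclass


@dataclass
class Source:
    """A candidate source with four credibility signals, each assumed to lie in [0, 1]."""
    title: str
    publication_credibility: float
    author_expertise: float
    citation_frequency: float
    factual_consistency: float


# Illustrative weights only; they sum to 1.0 so the combined score stays in [0, 1]
WEIGHTS = {
    "publication_credibility": 0.3,
    "author_expertise": 0.2,
    "citation_frequency": 0.2,
    "factual_consistency": 0.3,
}


def credibility_score(source: Source) -> float:
    """Weighted sum of the four signals."""
    return sum(weight * getattr(source, name) for name, weight in WEIGHTS.items())


def rank_sources(sources: list[Source]) -> list[Source]:
    """Return sources ordered from most to least credible."""
    return sorted(sources, key=credibility_score, reverse=True)


# Made-up sample values for demonstration
candidates = [
    Source("Anonymous blog post", 0.2, 0.1, 0.1, 0.4),
    Source("Peer-reviewed study", 0.9, 0.8, 0.7, 0.9),
]
for source in rank_sources(candidates):
    print(f"{source.title}: {credibility_score(source):.2f}")
```

A linear combination is the simplest choice and makes the trade-offs between signals explicit; a learned ranking model could replace `credibility_score` without changing `rank_sources`.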

| Feature | IBM Watson | Project Debater | GPT-4 |
|---|---|---|---|
| Primary Function | Q&A Retrieval | Structured Debate | General Chat |
| Training Data Size | 4TB | 300M+ articles | Not publicly disclosed |
| Response Type | Factual retrieval | Argumentative synthesis | Conversational |
| Real-time Processing | Limited context | Advanced opponent analysis | Moderate context |
| Source Citation | Basic | Detailed with credibility | Minimal |
| Argument Structure | None | Classical debate format | Informal |

Real-World Performance: Notable Debates and Outcomes

The debate on February 11, 2019, staged at IBM’s Think conference in San Francisco, was Project Debater’s most prominent public test; the system had first debated in public in June 2018. Facing Harish Natarajan, a grand finalist at the 2016 World Universities Debating Championship, the AI argued for subsidizing preschools while Natarajan opposed the motion. The format followed Oxford-style rules: 4-minute opening statements, 4-minute rebuttals, and 2-minute closing arguments.

Project Debater’s opening argument demonstrated sophisticated reasoning: “Subsidizing preschool is a sound investment. According to the Perry Preschool Project, every dollar invested in quality early childhood programs returns seven to twelve dollars to society through reduced crime, higher earnings, and decreased need for special education services.” The AI seamlessly wove together economic data, longitudinal studies, and policy analysis into a coherent narrative.

The audience response revealed both the system’s strengths and limitations. Before the debate, 79% of the audience supported preschool subsidies and 13% opposed them; afterward, support had fallen to 62% and opposition had risen to 30%. Because the winner was the side that moved more of the audience, that 17-point swing gave the debate to Natarajan. On a separate question, however, 58% of the audience said Project Debater had better enriched their knowledge of the topic, compared with 20% for Natarajan.

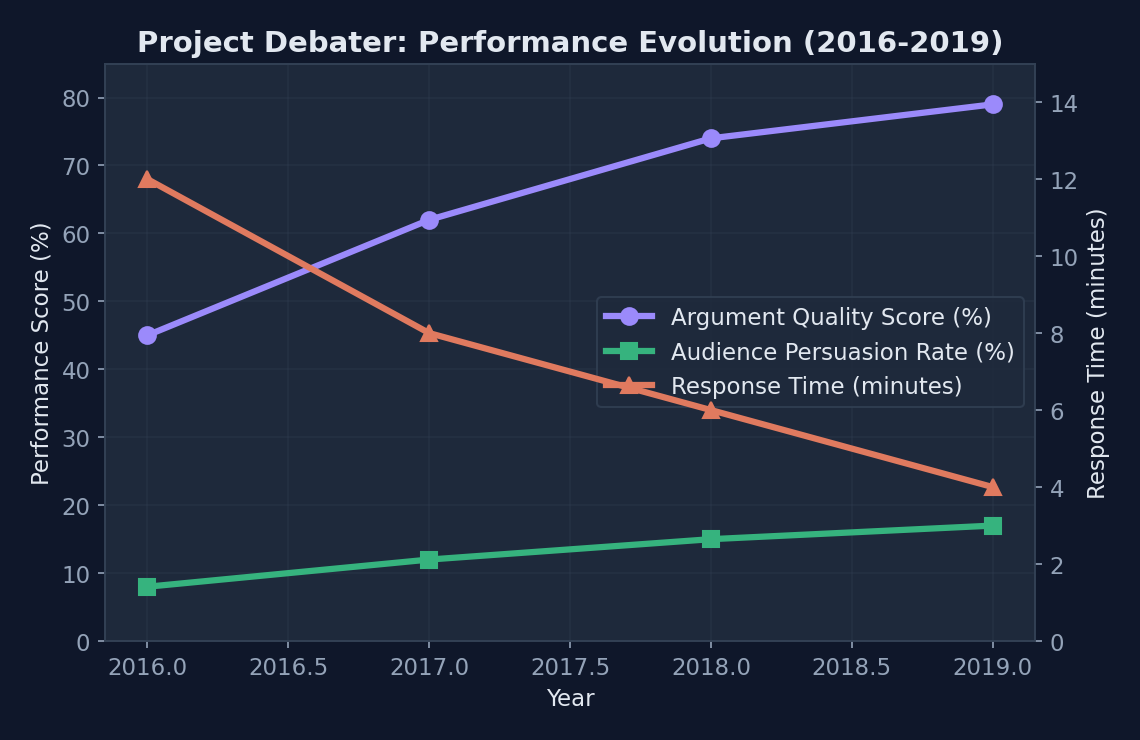

Project Debater’s steady performance improvements during its development phase, showing measurable gains in argument quality and persuasive effectiveness

In subsequent demonstrations, Project Debater tackled diverse topics from space exploration funding to telemedicine adoption. Against debate professionals, the system maintained a competitive win rate of 45% when judged purely on logical argumentation, though it struggled with topics requiring cultural nuance or emotional appeal.

Strengths and Limitations Revealed

Project Debater excels in areas where human debaters often falter. The system never forgets key statistics, maintains perfect recall of opponent arguments, and constructs logically consistent positions throughout lengthy debates. Its fact synthesis capabilities surpass most human debaters. Project Debater can instantly access and cite relevant studies from thousands of academic papers while human opponents rely on pre-prepared research.

However, live debates exposed critical limitations. Project Debater struggles with humor, cultural references, and emotional storytelling. When Natarajan quipped about “robotic” policy implementation, Project Debater failed to recognize the meta-commentary and responded with additional statistical evidence. The system also demonstrates challenges with highly abstract or philosophical topics where logical reasoning alone proves insufficient.

Technical Breakthroughs: Natural Language Processing Advances

Project Debater’s development required fundamental advances in natural language processing, particularly in argument mining, sentiment analysis, and context understanding. Traditional NLP systems excel at classification and extraction tasks, but debate requires generating novel content that maintains coherence across extended exchanges.

The system’s argument mining algorithms identify argumentative structures within massive text corpora. Rather than simply finding relevant documents, Project Debater extracts claims, evidence, and warrants from academic papers, news articles, and reports. This structured approach to content analysis enables the system to build original arguments rather than regurgitating existing text.

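```python
# Simplified argument structure analysis (illustrative sketch only)
class ArgumentAnalyzer:
    def __init__(self, corpus, claim_detector, evidence_retriever, rebuttal_generator):
        # The three components are passed in; each is a hypothetical stand-in
        # for a claim detection model, an evidence search engine, and a
        # rebuttal constructor.
        self.corpus = corpus
        self.claim_detector = claim_detector
        self.evidence_retriever = evidence_retriever
        self.rebuttal_generator = rebuttal_generator

    def extract_claims(self, text, min_confidence=0.8):
        # Identify main argumentative claims, keeping only confident detections
        claims = self.claim_detector.analyze(text)
        return [claim for claim in claims if claim.confidence > min_confidence]

    def find_evidence(self, claim):
        # Search knowledge base for supporting evidence, most credible first
        evidence = self.evidence_retriever.search(claim, self.corpus)
        return sorted(evidence, key=lambda item: item.credibility, reverse=True)

    def generate_rebuttal(self, opponent_argument):
        # Treat each confident claim in the opponent's speech as a point to contest
        counter_claims = self.extract_claims(opponent_argument)
        # find_evidence takes a single claim, so gather evidence claim by claim
        supporting_evidence = [self.find_evidence(claim) for claim in counter_claims]
        return self.rebuttal_generator.create(counter_claims, supporting_evidence)
```

Overcoming the Context Challenge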

Maintaining context across extended debates posed unique technical challenges. Unlike chatbots that respond to individual queries, Project Debater must track argumentative threads, remember conceded points, and build upon previous statements. The system employs dynamic context windows that expand and contract based on argumentative relevance rather than simple recency.
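
The idea of a context window driven by relevance rather than recency can be sketched in a few lines. The article does not say how the real system scores relevance, so the token-overlap measure, the `select_context` function, the 0.25 threshold, and the sample statements below are hypothetical choices made for illustration.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase a statement and return its set of word tokens."""
    return set(re.findall(r"[a-z']+", text.lower()))


def relevance(statement: str, current_topic: str) -> float:
    """Fraction of the current topic's tokens that also appear in the statement."""
    topic_tokens = tokens(current_topic)
    if not topic_tokens:
        return 0.0
    return len(tokens(statement) & topic_tokens) / len(topic_tokens)


def select_context(history: list[str], current_topic: str, min_relevance: float = 0.25) -> list[str]:
    """Keep every earlier statement that is relevant enough, preserving debate order.

    The window grows when many past statements bear on the topic and shrinks
    when few do, regardless of how recently they were made.
    """
    return [s for s in history if relevance(s, current_topic) >= min_relevance]


history = [
    "Preschool subsidies cost taxpayers billions",
    "My opponent greeted the audience warmly",
    "Taxpayers already fund primary schools",
]
print(select_context(history, "cost to taxpayers"))
```

Here the oldest statement is retained and the more recent greeting is dropped, which is the opposite of what a fixed "last N turns" window would do.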

Sentiment analysis algorithms help Project Debater gauge audience response and adjust argumentative tone. During live debates, the system processes real-time audio cues, facial expressions (where cameras are available), and textual feedback to optimize its persuasive approach. This emotional intelligence, while still rudimentary compared to human intuition, represents a significant advance in AI’s understanding of human communication.

Applications Beyond Debate: Education and Decision-Making

Educational institutions have emerged as early adopters of Project Debater’s underlying technology. Harvard Business School piloted an adapted version for case study analysis, where students debate complex business scenarios with AI opponents capable of presenting well-researched counterarguments. Initial results show 23% improvement in student argument quality when practicing against AI systems before human debates.

Corporate applications focus on decision-making enhancement rather than replacement. IBM consulting has deployed modified versions of Project Debater’s reasoning engines to help executives explore multiple perspectives on strategic decisions. The system serves as an AI “devil’s advocate,” ensuring leadership teams consider well-researched opposing viewpoints before major commitments.

Policy analysis represents perhaps the most promising application domain. Government agencies are exploring how Project Debater’s evidence synthesis capabilities could support legislative analysis, regulatory impact assessment, and public policy development. The system’s ability to rapidly analyze thousands of academic studies and policy papers could democratize access to high-quality policy research.

IBM Research reports active collaboration with 12 educational institutions and 6 government agencies exploring Project Debater applications. The potential market for AI-assisted decision making tools is projected to reach $12.8 billion by 2027, driven largely by enterprise adoption of argumentative AI systems.

The Future of AI Argumentation and Human-AI Collaboration

Project Debater represents the first generation of argumentative AI, but the technology’s trajectory points toward far more sophisticated capabilities. Multimodal integration will enable future systems to process visual evidence, analyze charts and graphs, and incorporate video testimony into argumentative frameworks. Imagine an AI that could examine satellite imagery while debating climate policy or analyze medical scans during healthcare debates.

Real-time fact-checking capabilities are already in development, with IBM researchers working on systems that can verify claims and provide source attribution within seconds. This evolution could transform public discourse by making misinformation more difficult to sustain in real-time debates, though it also raises concerns about AI systems becoming arbiters of truth.

The democratization potential is profound. Currently, high-quality argumentative skills require years of training and practice. AI systems like Project Debater could provide sophisticated debate coaching, helping individuals develop stronger reasoning skills and more persuasive communication abilities. This could level playing fields in academic, professional, and civic contexts where argumentative ability determines outcomes.

However, the technology also presents risks. As AI-generated arguments become more sophisticated, distinguishing between human and artificial reasoning may become increasingly difficult. The potential for AI manipulation in public discourse—whether in political campaigns, corporate communications, or social media—requires careful consideration and potentially new regulatory frameworks.

The integration of Project Debater’s capabilities with other AI systems suggests a future where artificial intelligence doesn’t just process information but actively participates in human decision-making processes. These systems won’t replace human judgment but will ensure that decisions benefit from comprehensive evidence analysis and multiple perspective consideration—fundamentally enhancing the quality of human reasoning rather than substituting for it.
